You can run inference with models from the Hub using the Sentence Transformers library. Install it with `pip install -U sentence-transformers`, import it with `from sentence_transformers import SentenceTransformer, util`, and load a model with `model = SentenceTransformer('sentence-transformers/all-MiniLM-L6-v2')`. The first embedding is computed with `embedding_1 = model.encode(sentences, convert_to_tensor=True)`, and a second embedding, `embedding_2`, is computed from the same `model`. With the Inference API, the request is sent with `response = requests.post(API_URL, headers=headers, json=payload)`. The model returns a score for each document according to its relevancy to the query.
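The `requests.post` call can be sketched roughly as follows. This is a minimal sketch, not the official client: the payload layout (`source_sentence` plus `sentences`) is an assumption about the endpoint, and `api_url` and `api_token` are placeholder parameters you would supply yourself.

```python
def build_payload(query, documents):
    """Bundle one query and its candidate documents into a request body.

    The "source_sentence"/"sentences" layout is an assumption about the
    endpoint; check the model's API documentation before relying on it.
    """
    return {"inputs": {"source_sentence": query, "sentences": list(documents)}}


def score_documents(api_url, api_token, query, documents):
    """POST the payload and return the decoded JSON response.

    api_url and api_token are placeholders supplied by the caller.
    """
    import requests  # third-party; imported here so build_payload works without it

    headers = {"Authorization": f"Bearer {api_token}"}
    payload = build_payload(query, documents)
    response = requests.post(api_url, headers=headers, json=payload)
    response.raise_for_status()  # surface HTTP errors instead of parsing an error body
    return response.json()
```

Keeping the payload builder separate from the network call makes it easy to inspect exactly what is sent before spending an API request on it.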

Passage Ranking is the task of ranking documents based on their relevance to a given query. The task is evaluated on Mean Reciprocal Rank. These models take one query and multiple documents and return the documents ranked by their relevancy to the query. The inputs are the query we want answered and the documents we want to search. You can infer with Passage Ranking models using the Inference API. You can find and use hundreds of Sentence Transformers models from the Hub by using the library directly, playing with the widgets in the browser, or using the Inference API.
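To make the ranking and its evaluation concrete, here is a small sketch in plain Python. It assumes the model has already returned one score per document; the function names are illustrative and not part of any library. Mean Reciprocal Rank averages, over all queries, the reciprocal of the position at which the first relevant document appears.

```python
def rank_documents(documents, scores):
    """Return (document, score) pairs sorted from most to least relevant."""
    if len(documents) != len(scores):
        raise ValueError("expected exactly one score per document")
    return sorted(zip(documents, scores), key=lambda pair: pair[1], reverse=True)


def reciprocal_rank(ranked_documents, relevant):
    """Return 1 / position of the first relevant document, or 0.0 if none appears."""
    for position, document in enumerate(ranked_documents, start=1):
        if document in relevant:
            return 1.0 / position
    return 0.0


def mean_reciprocal_rank(rankings, relevant_sets):
    """Average the reciprocal rank over several queries."""
    if not rankings or len(rankings) != len(relevant_sets):
        raise ValueError("need one non-empty ranking per set of relevant documents")
    total = sum(reciprocal_rank(r, rel) for r, rel in zip(rankings, relevant_sets))
    return total / len(rankings)


# Two toy queries: the relevant document is ranked 2nd for the first, 1st for the second.
rankings = [["d2", "d1", "d3"], ["d5", "d4"]]
relevant_sets = [{"d1"}, {"d5"}]
mrr = mean_reciprocal_rank(rankings, relevant_sets)  # (1/2 + 1/1) / 2 = 0.75
```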

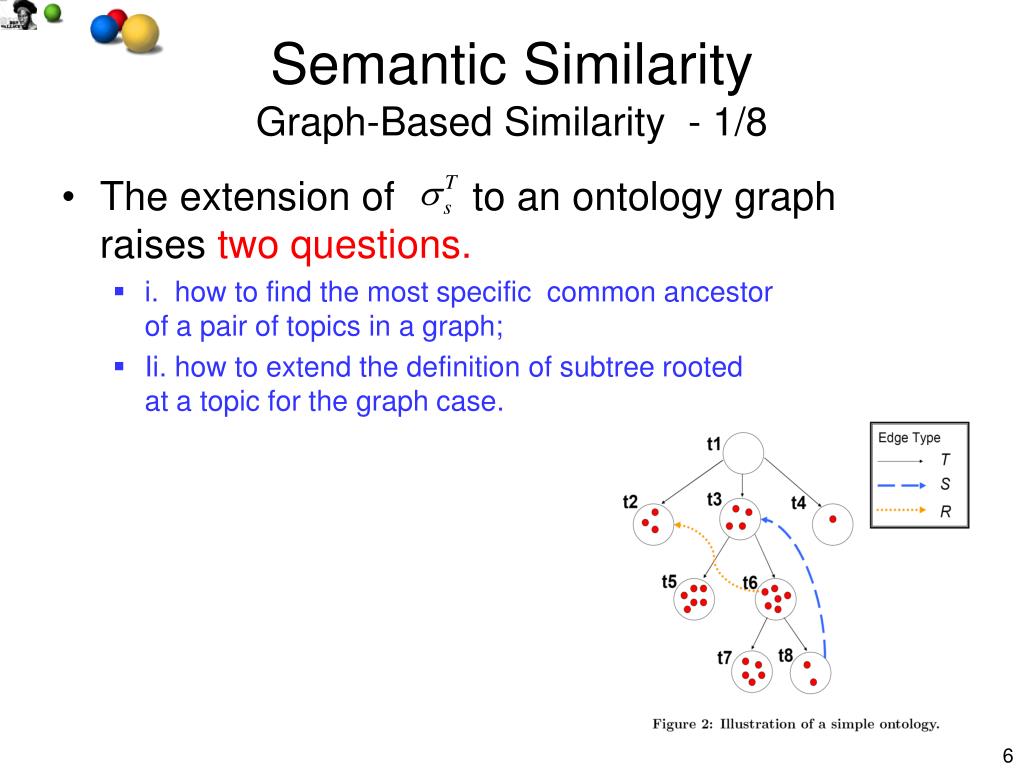

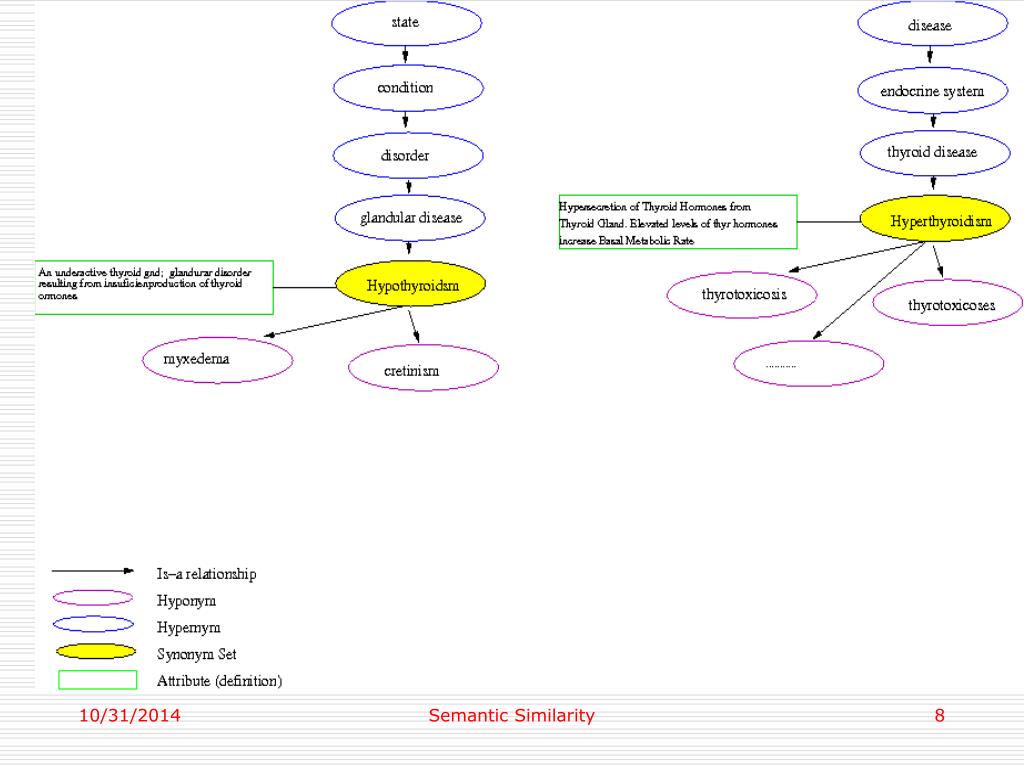

You can extract information from documents using Sentence Similarity models. The first step is to rank the documents using Passage Ranking models. You can then take the top-ranked document and search it with a Sentence Similarity model, selecting the sentence that is most similar to the input query. The Sentence Transformers library is very powerful for calculating embeddings of sentences, paragraphs, and entire documents. An embedding is just a vector representation of a text, and it is useful for finding how similar two texts are. The idea behind semantic similarity measurement, as applied to gene annotation, is the notion that genes with similar function should have similar annotation vocabulary.
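The two-step extraction can be sketched as follows. This is a simplified illustration under a few assumptions: the same embedding function is used for both steps (rather than a dedicated Passage Ranking model for the first), sentences are split with a naive regular expression, and all function names are hypothetical. `load_sentence_transformer` returns the library's `encode` method; `toy_encode` is a stand-in word-count encoder so the sketch runs without downloading a model.

```python
import math
import re


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def most_similar(query, candidates, encode):
    """Return (candidate, score) for the candidate whose embedding is closest to the query's."""
    query_vector = encode(query)
    scored = [(text, cosine_similarity(query_vector, encode(text))) for text in candidates]
    return max(scored, key=lambda pair: pair[1])


def extract_answer(query, documents, encode):
    """Step 1: pick the best document. Step 2: pick its best sentence."""
    best_document, _ = most_similar(query, documents, encode)
    # Naive splitter (an assumption): break after ., ! or ? followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", best_document) if s.strip()]
    return most_similar(query, sentences, encode)


def load_sentence_transformer(model_name="sentence-transformers/all-MiniLM-L6-v2"):
    """Return an encode function backed by Sentence Transformers (downloads the model)."""
    from sentence_transformers import SentenceTransformer  # imported lazily; third-party

    return SentenceTransformer(model_name).encode


def toy_encode(text):
    """Stand-in encoder for offline demos: word counts over a tiny fixed vocabulary."""
    vocabulary = ["paris", "capital", "france", "berlin", "germany", "river"]
    words = re.findall(r"[a-z]+", text.lower())
    return [float(words.count(word)) for word in vocabulary]
```

To try it with a real model, pass `load_sentence_transformer()` as the `encode` argument instead of `toy_encode`.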
